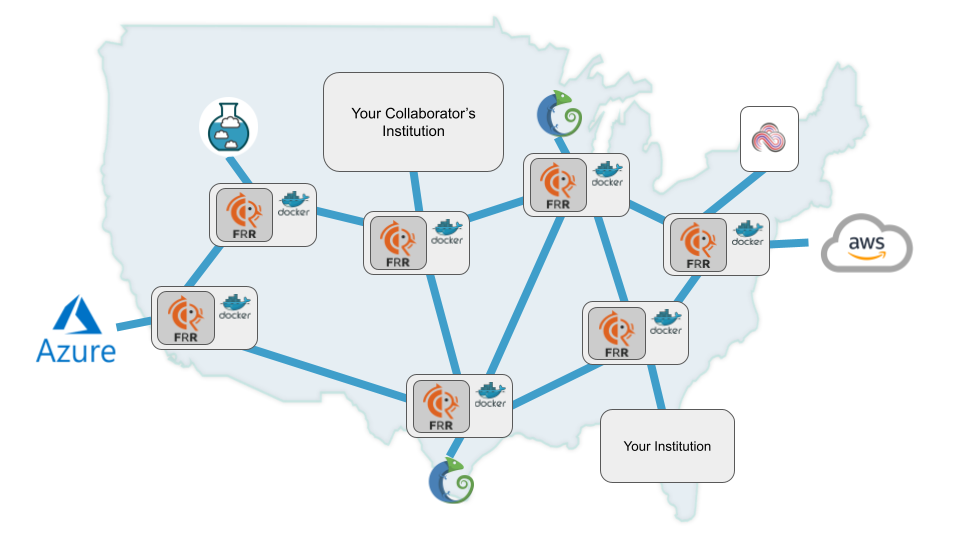

What if you could combine Chameleon's bare-metal GPU servers with FABRIC's programmable network fabric — and access the GPU over a private network without ever assigning a public IP? That's exactly what Chameleon's stitch port feature enables, and we've published a Trovi artifact that demonstrates the full workflow end to end.

The artifact provisions an RTX 6000 GPU server on Chameleon, connects it to a FABRIC slice over fabnetv4, installs Ollama with a DeepSeek-R1 model on the GPU, and queries the LLM from a FABRIC node — all through the private stitched network. You can use it as-is to run LLM inference, or adapt it as a starting point for your own cross-testbed experiments.

Why Stitch Ports?

Normally, accessing a Chameleon server from an external network requires a floating IP and exposing the server to the public internet. Stitch ports change that equation: when you reserve a Chameleon node with FABRIC as the stitch-port provider, the server gets a private IP on FABRIC's fabnetv4 network. A FABRIC node on the same network can then reach the Chameleon server directly — no public exposure, no firewall gymnastics for the network path between them.

This is especially valuable for GPU workloads. You get Chameleon's bare-metal GPU performance with FABRIC's network programmability, and you can orchestrate both sides from a single notebook.

What the Artifact Does

Find the artifact on Trovi at https://trovi.chameleoncloud.org/dashboard/artifacts/9b738237-f9ac-4a4b-9bc5-5f4bebbf9a04.

The notebook walks through five stages:

- Reserve a Chameleon GPU node with FABRIC as the stitch-port provider, selecting a GPU type (e.g., gpu_rtx_6000) and scheduling the lease.

- Create the Chameleon server on the reserved node, connected to the fabnetv4 network and booting a CC-Ubuntu20.04 image.

- Create a FABRIC slice with a compute node at the same site (TACC), attached to fabnetv4.

- Install GPU drivers and Ollama on the Chameleon server. The notebook SSHs from the FABRIC node to the Chameleon server over the private network to run the setup scripts.

- Query the LLM from the FABRIC node by POSTing to Ollama's HTTP API on the Chameleon server's private IP.

Here's what the final query looks like — a simple curl from the FABRIC node to the Chameleon GPU server:

    curl -X POST http://10.191.131.85:11434/api/generate \
      -H "Content-Type: application/json" \
      -d '{
        "model": "deepseek-r1:8b",
        "prompt": "Tell me a joke about computer networks.",
        "stream": false
      }'

And it works: the response comes back over the private fabnet link, with sub-millisecond ping latency between the two nodes:

    PING 10.191.131.85 (10.191.131.85) 56(84) bytes of data.
    64 bytes from 10.191.131.85: icmp_seq=1 ttl=63 time=0.121 ms
    64 bytes from 10.191.131.85: icmp_seq=2 ttl=63 time=0.105 ms
    64 bytes from 10.191.131.85: icmp_seq=3 ttl=63 time=0.110 ms

Prerequisites

Before running the notebook, you'll need accounts and credentials on both testbeds:

Chameleon Setup

- A Chameleon Cloud account with an active project.

- A key pair created at your experiment site (e.g., CHI@TACC) under Project > Compute > Key Pairs. Download the private key — you'll need it in the notebook.

- A GPU lease reservation with FABRIC selected as the stitch-port provider. Check the host calendar for GPU availability and note the reservation ID.

FABRIC Setup

- A FABRIC account with portal access.

- FABRIC credential files in your config directory: bastion keys, slice keys, fabric_rc, and ssh_config.

- A fresh token from the FABRIC Credential Manager (Experiments > Manage Tokens), saved as id_token.json.
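Before launching the notebook, it can help to confirm that the named FABRIC files are actually in place. The following is a minimal sketch, not part of the artifact: the helper name `missing_credentials` is hypothetical, and only the three file names listed above are checked, because bastion and slice key file names differ per user.

```python
from pathlib import Path

# File names taken from the prerequisites list above. Bastion and slice key
# file names vary per user, so they are deliberately not checked here.
REQUIRED_FILES = ("fabric_rc", "ssh_config", "id_token.json")


def missing_credentials(config_dir, required=REQUIRED_FILES):
    """Return the required file names that are absent from config_dir."""
    base = Path(config_dir).expanduser()
    return [name for name in required if not (base / name).is_file()]
```

Calling `missing_credentials()` with the path of your own config directory returns an empty list when all three files are present.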

Key Steps in the Notebook

Provisioning the Chameleon Server

The notebook uses Chameleon's chi Python bindings to create a bare-metal GPU server on the reserved lease, connected to the fabnetv4 network:

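    import chi

    # Create the server on the existing lease and keep the returned object,
    # because its ID is needed below.
    server = chi.server.create_server(
        server_name,                        # e.g. 'chi_fab_gpu_1'
        reservation_id=chi_reservation_id,
        network_name='fabnetv4',
        image_name='CC-Ubuntu20.04',
        key_name=chi_key_file)              # key_name expects the name of the registered key pair

    # Block until the bare-metal node reports ACTIVE.
    chi.server.wait_for_active(server.id)

    # Re-fetch the server and read its address on fabnetv4.
    chi_server = chi.server.get_server(server_name)
    fixed_ip = chi_server.addresses['fabnetv4'][0]['addr']

The server's IP on fabnetv4 is a private address: it is not reachable from the public internet, but it is directly accessible from any FABRIC node on the same network.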
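As a sanity check on the address lookup, the sketch below reads the first address on a network and refuses to continue if it is publicly routable. The helper name `first_private_ip` is hypothetical, and the sample dictionary only imitates the shape of the `addresses` attribute used above.

```python
import ipaddress


def first_private_ip(addresses, network="fabnetv4"):
    """Return the first address on `network`; raise if it is publicly routable."""
    addr = addresses[network][0]["addr"]
    if not ipaddress.ip_address(addr).is_private:
        raise ValueError(f"{addr} on {network} is not a private address")
    return addr


# Sample data shaped like the server's `addresses` attribute.
sample = {"fabnetv4": [{"addr": "10.191.131.85"}]}
print(first_private_ip(sample))
```

A guard like this is cheap insurance when adapting the notebook to another site or network name, where the returned address may not be what you expect.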

Creating the FABRIC Slice

On the FABRIC side, the notebook uses fablib to create a slice with a single node attached to fabnetv4:

    from fabrictestbed_extensions.fablib.fablib import FablibManager as fablib_manager

    fablib = fablib_manager()

    slice = fablib.new_slice(name='My-Fabric-Chameleon-GPU')
    node = slice.add_node(name='node1', site='TACC')
    node.add_fabnet()   # attach the node to FABNetv4
    slice.submit()      # waits for the slice to be provisioned

Once both sides are up, the FABRIC node can reach the Chameleon server over the private network. The notebook uploads the Chameleon SSH key to the FABRIC node and uses that node as a jump host to install software and run commands on the GPU server.
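The jump-host step amounts to running an `ssh` command on the FABRIC node that targets the Chameleon server's private IP. Here is one way to build that command string safely. It is a sketch under assumptions: the helper name and the key path are hypothetical, `cc` is the default login on Chameleon images, and `StrictHostKeyChecking=accept-new` is a choice made here, not something taken from the notebook.

```python
import shlex


def ssh_via_fabric_node(key_path, host, command, user="cc"):
    """Build the ssh command line to run on the FABRIC node.

    key_path is where the Chameleon private key was uploaded on the
    FABRIC node (a hypothetical location in the example below).
    """
    return " ".join([
        "ssh",
        "-i", shlex.quote(key_path),
        "-o", "StrictHostKeyChecking=accept-new",
        f"{user}@{host}",
        shlex.quote(command),  # quoted so it reaches the remote shell intact
    ])


cmd = ssh_via_fabric_node("/home/ubuntu/chi_key", "10.191.131.85", "nvidia-smi")
print(cmd)
```

With fablib, the resulting string could then be passed to `node.execute(cmd)`, which runs it on the FABRIC node and returns the captured stdout and stderr.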

Running LLM Inference

After installing NVIDIA drivers and Ollama on the Chameleon GPU server, the notebook pulls a model (deepseek-r1:8b) and configures Ollama to listen on all interfaces. From there, the FABRIC node can query the model directly over HTTP through the private fabnet link.
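If you would rather script the query than shell out to curl, the same request can be made with Python's standard library. This is a minimal sketch rather than the notebook's own code: the function names are hypothetical, and it assumes Ollama is listening on its default port 11434 and that the non-streaming reply carries the generated text in the `response` field.

```python
import json
import urllib.request


def build_generate_request(host, model, prompt, port=11434):
    """Build a non-streaming POST request for Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        f"http://{host}:{port}/api/generate",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(host, model, prompt, timeout=300):
    """Send the request and return the generated text."""
    req = build_generate_request(host, model, prompt)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]


# Example call (needs a reachable Ollama server, so it is left commented out):
# print(generate("10.191.131.85", "deepseek-r1:8b",
#                "Tell me a joke about computer networks."))
```

Keeping request construction separate from the network call makes the payload easy to inspect or unit-test without a live GPU server.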

Adapting the Artifact

The notebook is designed as a starting point. Here are a few ways you might adapt it:

- Swap the GPU type: Change the reservation to a different GPU (e.g., A100, V100) depending on your workload and availability.

- Run a different model: Replace deepseek-r1:8b with any model Ollama supports, or skip Ollama entirely and install your own inference framework.

- Add more FABRIC nodes: Scale the slice to multiple nodes that all query the same GPU server, which is useful for benchmarking or distributed workloads.

- Change the site: The artifact uses TACC, but you can target any Chameleon site that supports FABRIC stitch ports.

- Build on the cross-testbed pattern: The general approach — Chameleon for compute, FABRIC for network — applies beyond LLMs. Any workload that benefits from bare-metal GPU access and programmable networking can use this same stitch-port setup.
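Scaling the FABRIC side can be sketched as a small loop over the same two fablib calls the notebook already uses, `add_node` and `add_fabnet`. The helper name, the `client` prefix, and the node count are illustrative assumptions, not part of the artifact.

```python
def add_client_nodes(slice_obj, count, site="TACC", prefix="client"):
    """Add `count` nodes at `site` to the slice, each attached to FABNetv4."""
    nodes = []
    for i in range(1, count + 1):
        node = slice_obj.add_node(name=f"{prefix}{i}", site=site)
        node.add_fabnet()
        nodes.append(node)
    return nodes
```

You would call this on a new slice before `slice.submit()`, for example `add_client_nodes(slice, 3)`. Every resulting node can then reach the GPU server at the same private IP.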

Tips and Gotchas

- Reservation timing: GPU resources are in high demand. Check the host calendar and reserve ahead of time. Once your lease starts, begin using the resources promptly to avoid reclamation.

- Driver and model installs take time: CUDA drivers and large model downloads can take many minutes on bare-metal. Plan for this in your workflow.

- Key management: The notebook uploads a Chameleon private key to the FABRIC node for SSH access. This is convenient for experimentation but should be handled carefully — consider ephemeral keys for longer-running or shared experiments.

- Chi library versions: The chi Python bindings evolve across versions. If you encounter missing methods (e.g., interface_list), use the server's addresses attribute directly as shown in the notebook.

- Firewall configuration: To expose Ollama's API port on the Chameleon server, the notebook opens TCP port 11434 via firewalld. Adjust this if your application uses different ports.
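When a query hangs, a quick first diagnostic is whether the API port is reachable at all from the FABRIC node. The sketch below is a generic TCP check, not something from the notebook, and the helper name `port_is_open` is hypothetical. A False result suggests looking at the firewall rule or at Ollama's listen address before debugging the model itself.

```python
import socket


def port_is_open(host, port=11434, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

Run on the FABRIC node, `port_is_open("10.191.131.85")` would check the server address used in the examples above.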

Get Started

The full notebook is available as a Trovi artifact. Clone it, fill in your credentials and reservation details, and run through the cells to have a GPU-backed LLM endpoint accessible from FABRIC's network — all without a single public IP address.