This guide is written primarily for AE organizers planning to use Chameleon for their evaluation, though authors and reviewers will find the workflows section relevant to their own preparation.

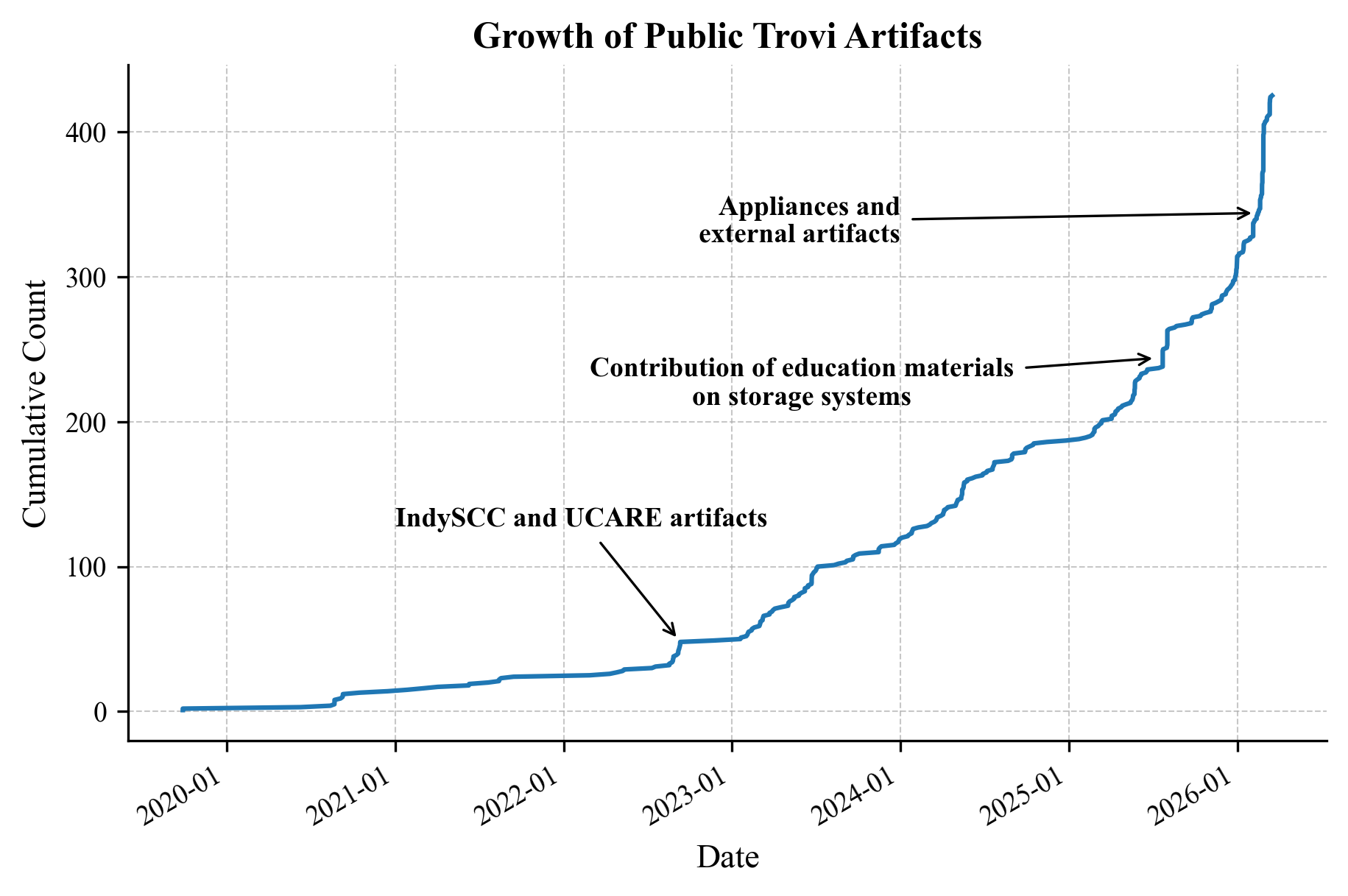

The vision of practical reproducibility, where published experiments can be re-run, extended, and built upon as naturally as they can be read about, has been gaining real traction in the computer science research community. One measure of that is the growth of Trovi, an experiment sharing platform integrated with shared infrastructure like Chameleon: of roughly 425 public artifacts currently hosted, 364 have been added by you, our users, since 2023. Those artifacts aren't just sitting idle, either: on average, a user-uploaded artifact is launched around 60 times per year by about 16 unique users, and authors continue to maintain them actively, releasing an average of at least two additional versions after the initial upload. This suggests researchers are treating Trovi less as an archive and more as a living platform for infrastructure-integrated science, which is exactly the kind of engagement that makes reproducibility meaningful in practice.

Figure 1. Cumulative growth of public artifacts on Trovi from 2020 to early 2026. Key inflection points are annotated on the graph.

Conferences and journals contribute to this growing supply of reproducible artifacts by hosting artifact evaluations (AEs), which challenge authors to package their research in a reproducible manner and award badges to artifacts based on reviewer feedback. These venues have been using Chameleon to supply resources for AE authors and reviewers since at least 2021, and over the past few years, through the NSF-funded REPETO project, we've significantly expanded that partnership. To date, we've supported reproducibility initiatives at more than 30 events across 16 major HPC and systems conferences, including SC, MLSys, SOSP, OSDI, FAST, EuroSys, and ATC. That experience has given us a clear picture of what makes evaluations on shared infrastructure go smoothly, and what tends to derail them. This post aims to share those lessons more systematically.

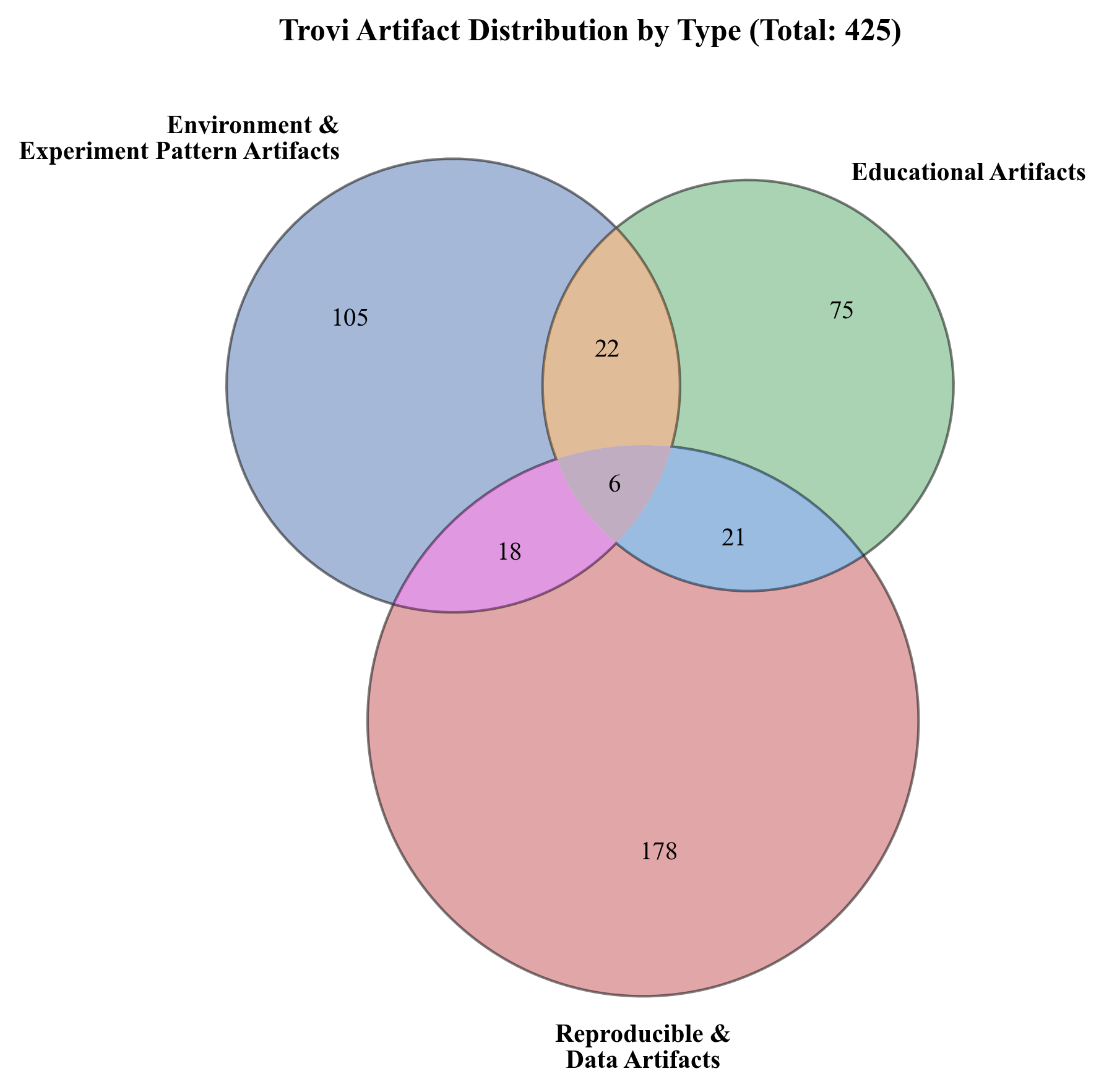

Figure 2. Distribution of 425 public Trovi artifacts across three category groups — environment & experiment, educational, and reproducible & data artifacts — and their intersections. Categories were assigned automatically by Google Gemini 1.5 Pro in deterministic mode based on artifact titles and descriptions; author-supplied tags were not used, as many artifacts have none assigned. Overlapping regions reflect artifacts Gemini classified into multiple categories. These counts are hypothesis-generating and have not been validated against human judgment or other reference standards.

Quick Reference: AE Organizer Checklist

Below is a short checklist summarizing the key tips in this post for AE organizers planning to use Chameleon. The sections that follow give more detailed guidance on specific items.

6+ weeks before the evaluation:

- Request PI eligibility on Chameleon if you don't already have it

- Survey authors on hardware requirements; share the resource discovery page and available base images (see survey example here)

- Make advance reservations for high-demand nodes based on survey responses and AE timelines

- Create separate Chameleon projects for authors and reviewers

- Set per-user SU budgets on both projects

Author preparation phase:

- Add authors to project as survey responses come in

- Schedule an author office hours session covering the availability calendar, base images, and a Chameleon walkthrough

- Communicate any unsupported hardware requirements early and work with the authors and/or the Chameleon team on alternatives

- Authors build, test, and snapshot their environments; package as Jupyter notebooks on Trovi where feasible

Review phase:

- Add reviewers to their project; schedule a separate reviewer onboarding session

- Encourage shared environments per artifact and short lease lengths (1–2 days)

- Set a hardware reservation deadline for reviewers based on author requirements ahead of any period of high activity

Post-evaluation:

- Encourage authors to publish their artifacts to Trovi and Zenodo

- Share the DayPass option with authors for future replication requests

Why Chameleon for AE?

The core reason Chameleon works well for AE is that it gives reviewers access to the same experiment environment the authors used. Chameleon offers a wide range of configurable resources: bare metal nodes with specialized hardware such as A100 and H100 GPUs, FPGAs, and custom storage configurations; virtual machines for experiments that don't require dedicated hardware; and containers for experimentation across the edge-to-cloud continuum. This breadth makes it possible to support a broad variety of systems experiments. Critically, authors can snapshot their configured environments so that reviewers deploy the exact same software stack in a single step, eliminating the most common source of setup friction. Advance reservations let authors and reviewers lock in needed hardware before the evaluation window opens. Packaged experiments can be shared via Trovi, an infrastructure-integrated experiment repository, where they remain accessible long after the evaluation closes for teaching, follow-on research, or future replication. For authors who want to extend access beyond the reviewer pool, DayPass allows anyone to reproduce a result without needing a full Chameleon account.

That said, Chameleon is a shared research testbed, not a dedicated AE platform, and it has real constraints. High-demand nodes are often booked out long in advance. Not every hardware configuration researchers might need is available. And like any shared system, it requires some coordination to use well. The rest of this guide is about how to do that coordination effectively.

Planning Your AE: The Steps That Matter Most

The most common issue we encounter with AEs is hardware availability. GPU nodes on Chameleon are frequently booked out three weeks or more in advance, and an evaluation window that opens with authors and reviewers scrambling for resources that aren't available is a frustrating experience for everyone. Almost all of this is preventable with a bit of upfront work.

Set up projects and user access. Organizers will need PI status on Chameleon before they can create a project; if you don't already have it, request it early, as approval can take a couple of days. We recommend creating separate projects for authors and reviewers to keep allocations clean and, where anonymity matters, to avoid accidental cross-visibility. Chameleon supports bulk member addition and a PI delegate feature for distributing the management workload. It is also worth setting a per-user SU budget at the project level early, which prevents any single user from inadvertently consuming a disproportionate share of the allocation.
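As a rough planning aid for choosing that per-user budget, the sketch below splits a project allocation evenly across members while holding back a reserve for reruns and late additions. It assumes one SU per node-hour; check Chameleon's current charging documentation for your node types, which is why the rate is a parameter. The function names, the 10% reserve, and the example figures are hypothetical.

```python
def per_user_su_budget(total_sus: int, num_users: int, reserve_pct: int = 10) -> int:
    """Split an allocation evenly across users, holding back reserve_pct percent.

    Integer arithmetic avoids floating-point surprises; results are rounded
    down so per-user budgets never sum to more than the usable pool.
    """
    if num_users <= 0:
        raise ValueError("num_users must be positive")
    if not 0 <= reserve_pct < 100:
        raise ValueError("reserve_pct must be in [0, 100)")
    usable = total_sus * (100 - reserve_pct) // 100
    return usable // num_users


def node_hours(budget_sus: int, sus_per_node_hour: float = 1.0) -> float:
    """Convert an SU budget into node-hours at an assumed charge rate."""
    return budget_sus / sus_per_node_hour


if __name__ == "__main__":
    # Hypothetical reviewer project: 20,000 SUs shared by 40 reviewers.
    budget = per_user_su_budget(20_000, 40)
    print(budget, node_hours(budget))  # 450 450.0
```

Keeping a reserve rather than dividing the whole allocation leaves room to top up a reviewer whose artifact turns out to need more hardware than the survey suggested.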

Survey requirements from authors early. Before the evaluation window opens, send a short survey to accepted authors asking about their hardware requirements: specifically, whether they need bare metal, what GPU configurations they expect to use, and roughly how long their experiments take to run. This doesn't need to be elaborate; even a simple Google Form will do. (Click here to view a sample template that you can use as a starting point.) The goal is to surface demand before it becomes contention. Point authors to Chameleon's resource discovery page and share the list of available base images (including CUDA and other ML-ready environments) so they can assess fit before responding. The survey also serves as your primary tool for identifying hardware requirements that Chameleon can't support: configurations such as multi-node NVLink setups or very large GPU counts may exceed what's available. If you spot gaps early, bring them to the Chameleon team; with enough lead time, we can often work with partner infrastructure providers to find alternatives.
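Once responses arrive, tallying demand per node type shows which reservations to make first and which requests need a conversation. The sketch below assumes a minimal response schema of our own invention (artifact name, node type, node count, estimated runtime). The node-type labels and the `available` set are placeholders, not Chameleon's actual catalog, which you should take from the resource discovery page.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class SurveyResponse:
    # Hypothetical schema; adapt the fields to whatever your form collects.
    artifact: str
    node_type: str        # placeholder labels, e.g. "gpu_a100"
    nodes: int
    runtime_hours: float


def demand_by_node_type(responses: list[SurveyResponse]) -> dict[str, float]:
    """Total node-hours requested per node type, highest demand first."""
    totals: dict[str, float] = defaultdict(float)
    for r in responses:
        totals[r.node_type] += r.nodes * r.runtime_hours
    return dict(sorted(totals.items(), key=lambda kv: kv[1], reverse=True))


def unsupported(responses: list[SurveyResponse], available: set[str]) -> list[str]:
    """Artifacts whose requested node type is not in the available set."""
    return [r.artifact for r in responses if r.node_type not in available]


if __name__ == "__main__":
    responses = [
        SurveyResponse("artifact-a", "gpu_a100", 2, 10),
        SurveyResponse("artifact-b", "gpu_a100", 1, 5),
        SurveyResponse("artifact-c", "compute", 4, 3),
        SurveyResponse("artifact-d", "multi_node_nvlink", 8, 12),
    ]
    print(demand_by_node_type(responses))
    print(unsupported(responses, available={"gpu_a100", "compute"}))
```

Artifacts returned by `unsupported` are the ones to raise with their authors and the Chameleon team while there is still lead time to find alternatives.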

In cases where the timeline is tight and you can't gather author requirements far enough in advance, a useful fallback is to reserve high-demand nodes as a block on the calendar based on your best estimate of demand. As the evaluation window approaches, you can release that block and let authors and reviewers make their own reservations. This isn't a perfect solution, since nothing prevents other users from reserving those nodes once you release the block, but it buys time and keeps options open. If you use this approach, communicate the release timing clearly so participants know when to log on and make reservations. And in either case, remind authors and reviewers to reserve only what they actually need: a two-hour experiment doesn't warrant a two-day lease.
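One way to turn "reserve only what you need" into a concrete number is to pad the estimated runtime by a safety margin and round up to whole hours. In this sketch the 1.5× margin and the function name are illustrative assumptions, not Chameleon guidance; the one-week ceiling reflects the standard lease limit.

```python
import math
from datetime import timedelta

# Standard leases run up to one week; longer continuous access has to be
# arranged with the Chameleon team.
MAX_LEASE = timedelta(days=7)


def suggested_lease(runtime: timedelta, margin: float = 1.5) -> timedelta:
    """Pad an estimated runtime by `margin` (illustrative) and round up to whole hours."""
    if runtime <= timedelta(0):
        raise ValueError("runtime must be positive")
    padded_hours = (runtime * margin) / timedelta(hours=1)
    lease = timedelta(hours=math.ceil(padded_hours))
    if lease > MAX_LEASE:
        raise ValueError("exceeds a standard lease; open a Help Desk ticket")
    return lease


if __name__ == "__main__":
    print(suggested_lease(timedelta(hours=2)))     # 3:00:00
    print(suggested_lease(timedelta(minutes=20)))  # 1:00:00
```

Raising instead of silently capping makes it obvious when an experiment needs a conversation with the Chameleon team rather than a longer lease.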

Make reservations before the evaluation windows open. Once you have a picture of hardware demand, make advance reservations for high-demand nodes as early as possible, ideally as soon as the evaluation timeline is known. Standard leases run up to one week, and you can make multiple leases for node types with sufficient capacity (but avoid lease stacking, i.e., chaining back-to-back leases on the same nodes to get around the one-week limit). If your evaluation requires longer continuous access to less-contested resources, discuss this with the Chameleon team by opening a Help Desk ticket. It's also worth setting a deadline for authors and reviewers to make their own reservations ahead of any period of high activity. If reviewers are active starting July 14, for example, having them reserve hardware by June 14 ensures no one arrives on day one only to find nothing available for weeks.
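Reservation deadlines are simple date arithmetic, but computing them for every phase at once helps keep the announcement consistent. The sketch below is hypothetical: the 30-day default mirrors the July 14 / June 14 example, and the 21-day floor reflects the observation that GPU nodes are often booked three or more weeks ahead. Neither value is Chameleon policy.

```python
from datetime import date, timedelta

# Illustrative values: GPU nodes are often booked three or more weeks out,
# so treat 21 days as a floor; 30 days matches the July 14 / June 14 example.
MIN_LEAD = timedelta(days=21)


def reservation_deadlines(
    phase_starts: dict[str, date], lead: timedelta = timedelta(days=30)
) -> dict[str, date]:
    """Map each AE phase to the date by which participants should reserve hardware."""
    if lead < MIN_LEAD:
        raise ValueError("lead time is shorter than the typical GPU booking horizon")
    return {phase: start - lead for phase, start in phase_starts.items()}


if __name__ == "__main__":
    # Hypothetical 2026 schedule.
    deadlines = reservation_deadlines({"reviewers": date(2026, 7, 14)})
    print(deadlines["reviewers"])  # 2026-06-14
```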

Schedule a Chameleon webinar or office hours for onboarding. We recommend scheduling separate sessions for authors and reviewers; both groups benefit from a live walkthrough, but their needs differ. An author session should cover the availability calendar, base images, the snapshotting workflow, and Trovi packaging. A reviewer session can focus on accessing a pre-built environment and what to expect from a well-packaged artifact. The Chameleon team is happy to co-facilitate these sessions; reach out via the Help Desk to coordinate timing.

Workflows During the AE

Following some or all of the tips above should ensure that, come time for reviewers to evaluate authors' artifacts, everyone has hardware available for running experiments. Below, we discuss a few more suggestions to assist authors and reviewers with workflows once they are using Chameleon resources during the AE.

(A more extensive description of these guidelines is available in our Community Report on the Challenges for Practical Reproducibility in HPC and Systems Research.)

Authors. Before the evaluation window opens, encourage authors to build and test their artifacts on Chameleon rather than waiting for reviewers to surface problems. The typical workflow is to start from a Chameleon base image (e.g., CUDA, TensorFlow, or another pre-configured environment), customize it for the experiment, and then snapshot the result so reviewers can deploy it without any setup work. Where feasible, encourage authors to package the experiment as a Jupyter notebook and share it via Trovi: this gives reviewers a self-contained, launchable artifact rather than a set of instructions to follow. See examples of Trovi artifacts packaged for other conference AEs here.

Reviewers. Where possible, reviewers of the same artifact should share a single deployed environment rather than each spinning up their own. This reduces resource consumption and surfaces environment issues collaboratively rather than in parallel. Encourage reviewers to coordinate timing: one reviewer deploys, and the others reproduce results within the same environment. Keep lease lengths short (one to two days is usually sufficient) so resources circulate across the reviewer pool rather than sitting idle.

Beyond the Evaluation

The value of a well-packaged artifact doesn't have to end when the evaluation closes. Experiments shared via Trovi remain publicly accessible and launchable afterward, which can be useful for teaching, follow-on research, and future replication. Trovi's recently updated dashboard makes it easier to manage artifacts over time, including improved GitHub integration so authors can keep artifacts in sync with their repositories as experiments evolve. From Trovi, authors can also publish to Zenodo to obtain a citable DOI for long-term archival. For authors who receive replication requests after the evaluation, DayPass allows them to grant one-off access to anyone without requiring a full Chameleon account, a lightweight way to keep artifacts alive and useful well beyond the conference.

Additional Resources

- Using Chameleon for Artifact Evaluation — the original 2021 guide

- Interactive Science Made Easy with Chameleon DayPass

- How to Organize Artifact Evaluations with Shared Infrastructure — webinar by Bogdan Stoica (January 2026)

- Community Report on Practical Reproducibility in HPC — SC24 workshop report

- Chameleon Hardware Catalog

- Chameleon Project Management Documentation

- Trovi Artifact Repository